Last time, due to circumstances, the Anthropic speakers had to present by video, but Anthropic Claude Night was held again while they were in Japan for AWS Summit Japan.

Anthropic Keynote & Demo

@YoMaggieVo @alexalbert__

Hopefully, everyone can hear me from back there. I'm going to move this a little bit. Okay, great.

To begin with, I'm here with Alex Albert, who is the head of our developer relations, and he will be speaking later.

I'm sure many of you have heard of Anthropic before, so I will go directly into Claude 3 and talk about our models. Today, we'll mostly be talking about vision and tool use.

To begin with, here's an overview of the Claude 3 model family. It consists of three large models. The first one is Opus, which is our largest, most capable model. Then we have Sonnet, which is our middle, balanced model, and then Haiku, which is our fastest and smallest model.

You can see in the chart on the left that they're also spread across different cost tiers. So when you select a model, it's important to think about which model can do all the things you need it to do without breaking the bank, at the price point that works for you.

Here is a brief overview of the things that Claude can do, which I think can be broken into three major categories. The first category is analysis. All three models in the Claude 3 family are incredibly capable at high-level, extremely nuanced analysis of things like large sets of documents, large sets of emails, different databases of both code and text, and so on.

The second major category of what Claude is capable of is content generation and general knowledge. Claude is able to do things like help you generate marketing copy and other types of business content, as well as be a really effective chatbot for things like product support, or just help you brainstorm your ideas or how to do something better.

Claude's a really good assistant to just help you think through things.

And the last area, which we think is the most exciting area going forward, is automation. With tool use, you're able to start automating end-to-end workflows so that Claude can do a series of tasks without you having to handle every step of the process.

We believe that this is going to be increasingly important for businesses and startups going forward, and more of a way that people use models as well.

Claude 3 is able to do this thanks to a series of improvements over previous models.

The first is that it's just faster all around. So you're able to do things like chain a bunch of tools without hurting your latency as much as it would if you were using older models.

It's also much more steerable, meaning you can do a lot more with little prompting or little prompt optimization. You can write shorter prompts and get better performance and behavior than before.

Furthermore, Claude is also much more accurate and trustworthy than previous generations, meaning, for example, that Claude can more accurately utilize information all across the context window.

We've also worked to further lower hallucination rates and to generally make the model more trustworthy in terms of its performance, as well as to give you tools like better citations so you can understand and verify what the model is doing.

Then lastly, and excitingly, all of the models in the Claude 3 family have vision capabilities, meaning that Claude can now understand images as well as text.

Going forward, I'm going to talk a little bit about our vision capabilities and what you can do with them. As an overview, our vision capabilities have been specifically trained for business-type use cases, meaning that Claude understands charts, graphs, technical diagrams, the kinds of things you would see a lot in business settings.


If you look at the series on the right, there's a bunch of examples of what this means. Claude is able to understand the condition of items for insurance purposes, or it's able to extract data from charts and graphs and then write code to analyze that information.

It's even able to transcribe handwriting.

We're very excited and grateful to have a close relationship with Amazon, in Japan especially, meaning that we've been able to get feedback on our models and how well they perform in Japanese.

That means we understand that our current models have some gaps. Claude 3 as it currently is cannot read, for example, extremely messy Japanese handwriting, or certain kanji that are really rare and older, and so we're working on making that better for future models.

I'm going to give you a few tips right now on how to properly use vision. These are the three main ones that I think will help you a lot.

The first one is to put your images right at the top. Just like you would with documents, you should put your images at the top, before your instructions and before your query, so that Claude has the image as context for understanding the rest of your instructions.

You should also label your images. Here I've labeled them just Image 1 through Image 4 in my example. Whatever label you give them, this allows you to better reference the different images in your instructions, and it gives Claude an understanding of the order of the images, or maybe even what those images are if you name them.

And then in general, for images that contain information, you may want to make it a two-step process, where your first step is just to ask Claude to extract the information, such as the numbers from the charts or certain things that it can see in the image, before asking it to do a task with that information.
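As a rough sketch of these three tips (not shown in the talk), the snippet below builds a `messages` list in the content-block shape used by the Anthropic Messages API: each image is preceded by a text label, and the instructions come last. The helper name `build_vision_messages`, the placeholder image bytes, and the prompt wording are assumptions made for illustration; no request is actually sent.

```python
import base64


def build_vision_messages(images, instructions):
    """Build a `messages` list with labeled images first and instructions last.

    `images` is a list of (media_type, raw_bytes) tuples.
    """
    content = []
    for index, (media_type, raw_bytes) in enumerate(images, start=1):
        # Tip 2: label each image so the instructions can refer to it by name.
        content.append({"type": "text", "text": f"Image {index}:"})
        content.append({
            "type": "image",
            "source": {
                "type": "base64",
                "media_type": media_type,
                "data": base64.b64encode(raw_bytes).decode("ascii"),
            },
        })
    # Tip 1: the instructions go after all of the images.
    content.append({"type": "text", "text": instructions})
    return [{"role": "user", "content": content}]


# Placeholder bytes stand in for real image files (hypothetical data).
fake_images = [("image/png", b"chart-one"), ("image/png", b"chart-two")]

# Tip 3, step one: only ask for extraction here; a second request would then
# ask for the actual task using the extracted values.
extract_step = build_vision_messages(
    fake_images,
    "List every number you can read from Image 1 and Image 2.",
)
```

In a real two-step flow, the extracted values from the first response would be passed into a follow-up prompt that asks for the analysis itself.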

So now I'm going to show you a video of an example of how Claude is able to extract a lot of information at very fast speeds from documents that are messy. Some of the images are hard to understand and hard to read.

Do I just play it and step aside?

Okay, go ahead. I have no introduction, it's just in English. Do I play it with sound or no? Can you reduce the sound? Yes, but it'll be in English. Okay, let me go back for a second.

Please play it in English. Okay. Do I pause for them to translate? Okay.

To demonstrate this, we're going to read through thousands of scanned documents in a matter of minutes. The Library of Congress Federal Writers' Project is a collection of thousands of scanned transcripts from interviews during the Great Depression.

This is a gold mine of incredible narratives and real-life heroes, but it's locked away in hard-to-access scans and transcripts. Imagine you're a documentary filmmaker or journalist. How can you dig through these thousands of messy documents to find the best source material for your research without reading them all yourself?

Since these documents are scanned images, we can't feed them into a text-only LLM, and these scans are messy enough that they'd be a challenge for most dedicated OCR software. But luckily, Haiku is natively vision capable and can use surrounding text to transcribe these images and really understand what's going on.

We can also go beyond simple transcription for each interview and ask Haiku to generate structured JSON output with metadata like title, date, and keywords, but also use some creativity and judgment to assess how compelling a documentary the story and characters would make. We can process each document in parallel for performance and, with Claude's high-availability API, do that at massive scale for hundreds or thousands of documents.

Let's take a look at some of that structured output. Haiku was able to not just transcribe but pull out creative things like keywords. We've transformed this collection of many, many scans into rich, keyword-structured data. Imagine what any organization with a knowledge base of scanned documents, like a traditional publisher, healthcare provider, or law firm, can do. Haiku can parse their extensive archives and bodies of work.

We'd love for you to try it out and see what we built.

So, in that demo, you can see that Haiku especially can read documents extremely quickly. This video is slower than how fast Haiku is now. When we make demos now, we have to slow down the video just to see the output generated properly.
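The pattern described in the video (one request per scanned document, run in parallel, each returning structured JSON) can be sketched roughly as follows. This is not the demo's actual code: `transcribe_scan` is a stand-in that returns a fixed JSON string instead of calling a model, and the field name `documentary_score` is a hypothetical label for the "how compelling" judgment.

```python
import json
from concurrent.futures import ThreadPoolExecutor


def transcribe_scan(scan_id):
    """Stand-in for one vision request; returns a JSON string like a model might."""
    # Hypothetical fixed response; a real call would send the scanned image.
    return json.dumps({
        "title": f"Interview {scan_id}",
        "date": None,
        "keywords": ["placeholder"],
        "documentary_score": 0,
    })


def process_scans(scan_ids, max_workers=8):
    """Run one request per scan concurrently and parse each JSON result."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() returns results in input order even though calls overlap.
        raw_results = list(pool.map(transcribe_scan, scan_ids))
    return [json.loads(raw) for raw in raw_results]


records = process_scans(["scan-001", "scan-002", "scan-003"])
```

Threads are a reasonable fit here because each request mostly waits on the network; with a real API you would also want retry and rate-limit handling, which this sketch leaves out.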

Here are some other examples I want to show you. This is an image of a whiteboard where the text is in cursive. It's hard to understand and read, and the image itself is also on a very reflective surface.

I'm asking Claude to transcribe that content into JSON, and I'm not giving it the schema. I'm asking it to come up with a schema that makes sense given the information.

You can see that, as a result, Claude not only transcribes it perfectly, even the capital letters, but also picks a JSON schema that makes sense for the information architecture of the whiteboard.

The coolest part of this, I think, is that Claude is able to pull out the very last line of both sides of the whiteboard and understand that it's not part of the list above it. So in the JSON output (I will give you these slides so you can see it for yourself), Claude is also separating that line from the rest of the list in its results.

I have another demo I wanted to show you, but I want to get to tool use in time, so I'm going to show you just the last part of it. Again, I'll give you these slides so you can see them later.

In the previous slides, I asked Claude to analyze the picture of this shoe and to come up with information within a given JSON schema.

But in this example, I am not giving Claude a JSON schema up front. I'm asking Claude to just write out everything that it can confidently infer from looking at the image alone. I'm testing Claude's analytical capabilities.

You can see in the results that Claude is able to pull out the brand of the shoe and the colors, but also things like the materials and how it has a reflective, silver kind of detail in the back, for example.

Claude is even able to tell that the shoe is made for running on trails, and on what kind of terrain, such as gravel and dirt.

So I'm excited to see what you all can do with vision. I think it's state of the art in many ways and one of the most capability-unlocking areas of the Claude 3 family.

So now let's talk about agents and tool use.

For those of you who don't know what agents are, they look a little bit like this.

Claude 3 especially has really improved agentic capabilities compared with previous models.

In this example, you can see that given a specific user request, such as asking for reimbursement for blood pressure medication, Claude is able to analyze the user's goals and then complete a series of tasks that ends in either giving the reimbursement or saying something else that helps the user further along.

You can see that the list of tasks Claude is completing includes things like pulling the customer records and running drug interaction safety checks. These are all things that Claude can do with tool use.

So you can create agent workflows with tool use, and tool use is really simple under the hood.

It basically consists of a user prompt or a question, as well as a list of tools that you've given Claude. Each tool just needs a name, a description of what the tool is, and all the parameters that you would need to run that tool.

Then you give all that to Claude, and Claude is able to analyze the question and choose the right tool for the situation. In my example, my question is: what is the final score of the Yomiuri Giants game on June 16th, 2024, just two days ago?

I'm asking this question because tool use is a really good way to update Claude's information when you need to find out something from after the training data cutoff. Claude was not trained two days ago, meaning Claude doesn't actually know this information.

On the right side of the slide, you can see that Claude would not only pick the right tool but also be able to extract the various parameters from the question, such as the name of the team and the date of the game.
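To make the "name, description, parameters" structure concrete, here is a minimal sketch of how such a tool could be declared in the shape the Anthropic Messages API uses (`name`, `description`, `input_schema`). The tool name `get_game_score` and its fields are hypothetical, since the slide's actual definition is not in the transcript, and `expected_tool_use` only illustrates the kind of tool request block a response might contain.

```python
# Hypothetical tool definition: name, description, and JSON Schema parameters.
get_game_score_tool = {
    "name": "get_game_score",
    "description": "Look up the final score of a baseball game for a team on a date.",
    "input_schema": {
        "type": "object",
        "properties": {
            "team": {"type": "string", "description": "Team name."},
            "date": {"type": "string", "description": "Game date, YYYY-MM-DD."},
        },
        "required": ["team", "date"],
    },
}

# Request body pairing the user's question with the list of tools.
request_body = {
    "model": "claude-3-haiku-20240307",
    "max_tokens": 1024,
    "tools": [get_game_score_tool],
    "messages": [{
        "role": "user",
        "content": "What was the final score of the Yomiuri Giants game on June 16th, 2024?",
    }],
}

# Illustrative only: the shape of a tool request with extracted parameters.
expected_tool_use = {
    "type": "tool_use",
    "id": "toolu_example_01",  # made-up identifier
    "name": "get_game_score",
    "input": {"team": "Yomiuri Giants", "date": "2024-06-16"},
}
```

The description matters as much as the schema: it is the main signal the model has for deciding when a tool applies.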

Here's another example that shows you what you need to do to have successful tool use.

In this example, I have three different tools: one to look up inventory information, one to generate an invoice, and one to send an email.

All of these are attached to various functions in your code. Looking up inventory requires that you query a database, and sending an email requires that you connect to an email API.

Claude doesn't have any tools of its own and it doesn't run any of these tools. So you have to write all these functions, and you also have to run them on Claude's behalf.

This allows you much finer control over the types of tools that you create, instead of relying on any simple ones that we might make that don't fulfill your specific needs and use cases.
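Because the tools run in your own code, one common arrangement is a small dispatch table that maps each tool name to a local function. The sketch below assumes three stub functions named after the tools in the example; their names, parameters, and return values are invented for illustration, and a real version would query a database or call an email API.

```python
def lookup_inventory(sku):
    """Stub: a real version would query your inventory database."""
    return {"sku": sku, "in_stock": 12}


def generate_invoice(sku, quantity):
    """Stub: a real version would create an invoice record."""
    return {"sku": sku, "quantity": quantity, "invoice_id": "INV-0001"}


def send_email(to, subject, body):
    """Stub: a real version would call an email API."""
    return {"to": to, "status": "queued"}


# Map the tool names the model may request to the functions that implement them.
TOOL_FUNCTIONS = {
    "lookup_inventory": lookup_inventory,
    "generate_invoice": generate_invoice,
    "send_email": send_email,
}


def run_tool(name, tool_input):
    """Run the requested tool on the model's behalf and return its result."""
    if name not in TOOL_FUNCTIONS:
        raise ValueError(f"Unknown tool: {name}")
    return TOOL_FUNCTIONS[name](**tool_input)


result = run_tool("lookup_inventory", {"sku": "ABC-123"})
```

Rejecting unknown tool names explicitly keeps a malformed request from silently doing nothing.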

In my next example, I'm going to show you a detailed walkthrough of what it looks like when you call a tool and return the information to Claude.

I'm going to begin by having a user ask a question like, how many shares of General Motors can I buy with $500? This information is not available in Claude's training data, because stock information changes all the time.

Claude looks at that question, and it knows it has a get stock price tool that we've given it, so it's going to ask you to call the tool. That's what Claude is outputting.

Then it's your job to query the stock market API with the information that Claude asked you for, get that information back, and send the results of the tool call to Claude in the next part of the conversation.

Claude is then able to use that information to generate an answer for the user, in this case doing some simple math to tell the user that they can buy about 11 shares of General Motors at this time with $500.
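The walkthrough above can be traced as a sequence of messages. The sketch below follows the Anthropic Messages API block shapes (`tool_use` from the assistant, `tool_result` from the user), but it makes no network calls: the assistant turn is written out by hand, `get_stock_price` is a stub, and the 45.0 price is a made-up placeholder chosen only so the arithmetic lands near the talk's "about 11 shares".

```python
def get_stock_price(ticker):
    """Stub for a stock market API call; the price is a made-up placeholder."""
    return 45.0


# 1. The user asks a question that needs fresh data.
messages = [{
    "role": "user",
    "content": "How many shares of General Motors can I buy with $500?",
}]

# 2. Hand-written example of the assistant turn requesting a tool call.
assistant_turn = {
    "role": "assistant",
    "content": [{
        "type": "tool_use",
        "id": "toolu_example_01",  # made-up identifier
        "name": "get_stock_price",
        "input": {"ticker": "GM"},
    }],
}
messages.append(assistant_turn)

# 3. Your code runs the tool with the inputs the model asked for.
tool_call = assistant_turn["content"][0]
price = get_stock_price(**tool_call["input"])

# 4. The result goes back in the next user turn, tied to the tool call id.
messages.append({
    "role": "user",
    "content": [{
        "type": "tool_result",
        "tool_use_id": tool_call["id"],
        "content": str(price),
    }],
})

# The "simple math" the final answer relies on: whole shares for $500.
whole_shares = int(500 // price)
```

Sending `messages` back to the model at this point is what lets it produce the final natural-language answer.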

You might also know that there's something called Agents for Amazon Bedrock, and I want to tell you a little bit about what that is and how it's different from and similar to using tool use directly with Claude.

In the middle, you can see the things that they have in common: you can define the tools, Claude is still able to request which tools to use, and you still have to run those tools on your side and provide the information to Claude.

You're also able to see Claude's train of thought as to why it chose the tool, in both Agents for Amazon Bedrock and tool use with Anthropic directly.

Then, separately, you have the ability to do JSON mode. For every single tool call that Claude asks you for, Claude gives you the information in a JSON schema, so you can use this to your advantage as a surefire way to get information returned as JSON.

You can also force tool use, meaning that you can require Claude to definitely use a tool, either a specific tool or any one from a list of tools that you gave it.

These more advanced features are possible because, when using tool use with Anthropic directly, you're able to see every single step of the process, which allows you to take advantage of JSON mode or forced tool use.
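A minimal sketch of how forced tool use and the JSON-mode trick can be expressed in a request, using the `tool_choice` field of the Anthropic Messages API. The tool `record_summary`, its schema, and the helper `build_request` are hypothetical names introduced for illustration; the snippet only builds request bodies and sends nothing.

```python
# Hypothetical tool whose only purpose is to make the model emit structured JSON.
record_summary_tool = {
    "name": "record_summary",
    "description": "Record a structured summary of the given text.",
    "input_schema": {
        "type": "object",
        "properties": {
            "title": {"type": "string"},
            "keywords": {"type": "array", "items": {"type": "string"}},
        },
        "required": ["title", "keywords"],
    },
}


def build_request(prompt, tool_choice):
    """Build a request body with the tool list and a tool_choice setting."""
    return {
        "model": "claude-3-haiku-20240307",
        "max_tokens": 1024,
        "tools": [record_summary_tool],
        "tool_choice": tool_choice,
        "messages": [{"role": "user", "content": prompt}],
    }


# Force one specific tool: its `input` then follows the schema (JSON mode).
forced_specific = build_request(
    "Summarize this interview transcript.",
    {"type": "tool", "name": "record_summary"},
)

# Require some tool from the list, leaving the choice to the model.
forced_any = build_request("Summarize this interview transcript.", {"type": "any"})
```

With a specific tool forced, your code reads the JSON from the tool request's `input` and never needs to run an actual tool.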

On top of this, there is Agents for Amazon Bedrock. What Agents does is build a layer on top of tool use to abstract away the tool use syntax and structure, which allows you to get started a lot more quickly and easily. But you'll also have a little less fine-grained control over the process.

So with all this being said, I have a few demos to show you and then we can close out the keynote.

The first one is about a very simple way to use tool use with Claude for customer support.


The next demo I'm going to show you is using Claude 3 to search the web and find the fastest algorithm of a certain type, using orchestration of Haiku.

Sub-agent orchestration is when you have a more capable model like Opus call on and write prompts for a smaller model like Haiku. In this case, it is going to do that a hundred times in parallel.
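The control flow of sub-agent orchestration can be sketched without any model calls. In the snippet below, both `orchestrator_write_prompts` (standing in for the larger model) and `sub_agent_run` (standing in for the smaller model) are stubs with invented names and outputs; only the fan-out structure reflects what the talk describes.

```python
from concurrent.futures import ThreadPoolExecutor


def orchestrator_write_prompts(task, count):
    """Stand-in for the larger model writing one prompt per sub-task."""
    return [f"{task} (sub-task {i + 1} of {count})" for i in range(count)]


def sub_agent_run(prompt):
    """Stand-in for a single smaller-model call."""
    return f"result for: {prompt}"


def orchestrate(task, count=100, max_workers=16):
    """Have the orchestrator write prompts, then run the sub-agents in parallel."""
    prompts = orchestrator_write_prompts(task, count)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Results come back in the same order as the prompts.
        return list(pool.map(sub_agent_run, prompts))


results = orchestrate("Summarize one candidate algorithm", count=100)
```

In a fuller version, the orchestrating model would typically also read the collected results and produce the final answer.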


So the last demo I want to show you is how you can do this with vision as well.

In this demo, we've given Claude some tools to browse the web, as well as to run the code that it writes, and the ability to call on some sub-agents. So in this case, Opus will call on Haiku.


I think the coolest part of this demo is how, if you give Claude the ability to run and understand the code, it can reason about why it made a mistake and then fix the code and make it better without you having to debug it yourself.

So I have a bunch of useful resources that I will leave you with, and I will give you the slides so that you can see all the different resources here on how you can do more exciting and more advanced work with Claude.

And with that, thank you. Thank you so much.

I had NotebookLM create the following summary.

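Overview and Key Points of the Claude 3 Presentation

This document summarizes the content of Anthropic's "Claude 3" presentation.

Main themes:

- Overview of the Claude 3 model family

- New features and improvements in Claude 3

- Vision capabilities

- Agents and tool use

- Comparison with Agents for Amazon Bedrock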

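Key ideas and facts:

Claude 3 model family

- Opus: the largest and most capable model

- Sonnet: a balanced, mid-sized model

- Haiku: the fastest and smallest model

The models are offered at different cost tiers and can be chosen to fit the use case and budget.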

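New features and improvements in Claude 3

- Speed: improved latency when chaining multiple tools

- Better steerability: better performance and behavior from shorter prompts

- Better accuracy and trustworthiness: uses information across the entire context window, lower hallucination rates, improved citations

- Vision capabilities: image understanding trained for business use cases such as charts, graphs, and technical diagrams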

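Vision capabilities

- Understands the charts, graphs, technical diagrams, and similar content found in business documents

- Can assess the condition of items, extract data from charts, transcribe handwriting, and more

- Japanese recognition is still developing, with improvements expected in the future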

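How to use vision effectively

- Place images before the instructions or query

- Label the images

- For images that contain information, extract the information first and then give the task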

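Agents and tool use

- An agent analyzes the user's request and carries out a series of tasks to help achieve the goal

- Tool use makes it possible to build agent workflows

- Claude does not run tools itself; the user must provide the tools and run them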

Keys to successful tool use

- Define the tool name, description, and parameters

- Run the tool and return the information to Claude

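Comparison with Agents for Amazon Bedrock

- In common: defining tools, Claude requesting tools, and running the tools on the user's side

- Specific to using Claude directly: JSON mode and forced tool use

- Agents for Amazon Bedrock: abstracts away the tool use syntax to make it easier to get started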

Demos

- Simple tool use for customer support

- Orchestration for web search and finding a fast algorithm

- A demo combining vision and code execution

Notable quotes:

- “Claude is also much more accurate and trustworthy than previous generations, meaning, for example, that Claude can more accurately utilize information all across the context window.”

- “We believe that this is going to be increasingly important for businesses and startups going forward, and more of a way that people use models as well.”

- “The coolest part of this, I think, is that Claude is able to pull out the very last line of both sides of the whiteboard and understand that it’s not part of the list above it.”

- “Claude doesn’t have any tools of its own and it doesn’t run any of these tools. So you have to write all these functions, and you also have to run them on Claude’s behalf.”

- “I think the coolest part of this demo is how, if you give Claude the ability to run and understand the code, it can reason about why it made a mistake and then fix the code and make it better without you having to debug it yourself.”

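Summary

Claude 3 is an LLM that has advanced in many respects, including speed, accuracy, vision capabilities, and improved tool use. It is optimized for business use cases and is expected to be adopted by more and more companies and startups.

Prompting tips for working with images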

https://docs.anthropic.com/en/docs/build-with-claude/vision#example-one-image

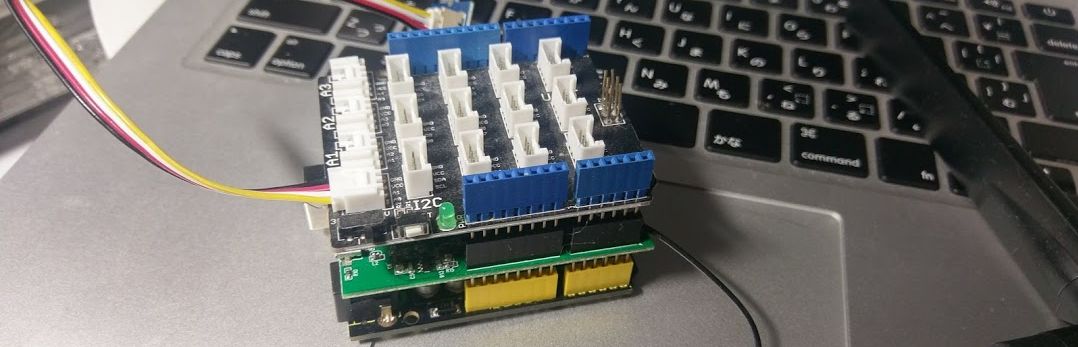

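LT① I tried building a chat app with Claude 3 on Bedrock (with Converse API + Tool use)

@recat_125

LT② I built a system that automatically generates CloudFormation and parameter sheets from AWS architecture diagrams

@tsukuboshi0755

LT③ Implementing LangChain Agent with Retriever on Bedrock (Claude 3 Opus)

@meitante1conan

LT④ How do you do LLMOps / evaluation with AWS Bedrock?

@ry0_kaga

Other

At AWS Summit Day 1, there appears to be a session where you can consult with Maggie Vo, who spoke for Anthropic today, and AWS SAs.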